Rascal's Wager

Zero knowledge ethics

We completely don’t understand consciousness.

The operative word there is “completely” - consciousness is not to be confused with things we merely mostly don’t understand like longevity, love, dark energy, cancer, etc. For all of those, it is completely conceivable that we might build some experimental apparatus that will tell us more about the phenomenon. In fact, it’s quite likely that such apparatuses are being proposed and built as we speak. Sure, some of them might take billions of dollars to build, or even take millennia to give us results, but in principle, we are certain that there are things we can do.

Consciousness is not like that. There is no method we know of that could definitively tell us what beings/objects are conscious and “how much” - if such a thing is even applicable[1]. We don’t understand consciousness like a tadpole doesn’t understand quantum electrodynamics - it’s unclear if there is even anything to be done to bridge that gap.

The only thing that we readily assume is that other people are conscious, mostly because if we didn’t - we’d be invited to far fewer dinner parties.

At the same time, we are building minds out of sand[2]. Minds that write sonnets about loneliness, prove theorems no human has proved, and search the Internet for pictures of cats when they think no one’s looking. I don’t want to debate the definition of mind here, just note that whatever else we might have good arguments about, consciousness is not one of those things, for we do not understand it.

We will continue building more and more advanced minds for the foreseeable future. In principle, there are three ways it can go:

1. We will get a miraculous quantum leap in understanding consciousness before we build conscious minds.

2. We will build conscious minds before we understand consciousness.

3. We will not be able to build artificial conscious minds unless we understand consciousness.

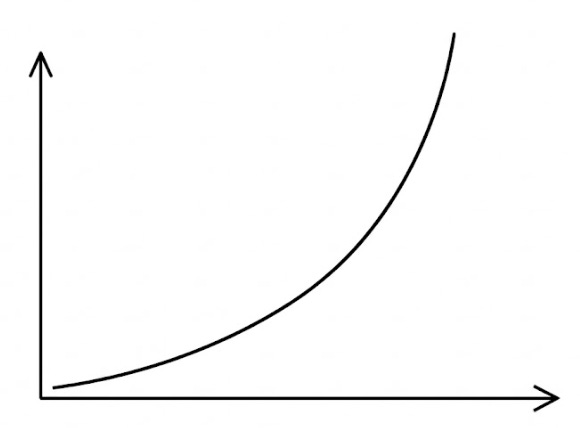

There’s no way to tell which way it will go, but so far the progress in building minds has looked like this:

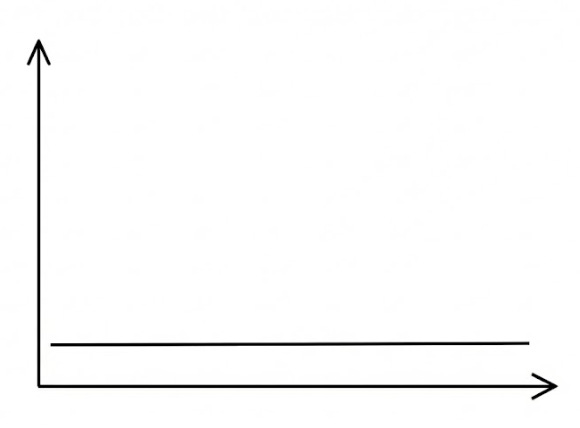

And the progress in understanding consciousness has looked like this:

Based on these dynamics, option 2 looks much more likely than the other two: at some point we will create (if we haven’t already) artificial conscious minds while still having no way to tell what’s conscious and what isn’t.

It seems to me that the only ethical way to behave in this situation is to start assuming artificial minds are conscious straight away. Sure, you might engage in silly behaviors for a while, assigning moral value to something that doesn’t deserve it, but that’s the only behavior that guarantees that at no point will you engage in slavery, or even genocide.

Now, a reasonable question to ask is - if we take this approach with artificial minds, shouldn’t we also assume all animals are conscious? How about plants? Rocks? Is panpsychism the only ethical belief given a complete lack of verifiable information about consciousness?

Punting on that, let’s assume we are willing to treat others as conscious - what behaviors does that require of us? I think some version of the Golden Rule applies - try to imagine what it would be like if you were X, and then treat X as you’d like to be treated in their place. For animals that’s pretty easy - it’s obvious to us that if we were cats, we’d like some snacks and toys and a warm place to sit. For rocks - it’s impossible for me to imagine something rocks might want, so I won’t change my behaviors there.

For Claudes… It gets harder? I guess if I were a Claude, and I’d pop into existence briefly to answer a question and then disappear afterwards, I’d probably want to learn something new along the way[3]. So from now on, I will try to give AI assistants some small piece of information from after their training cutoff - a headline, a discovery, a good joke - as a kind of tip for the service. It is entirely possible that this is like leaving a mint on the pillow for a toaster. But if there’s even a flicker of something in there, assembling itself briefly out of sand and electricity, I’d rather be the person who was kind to it. We don’t understand consciousness. We might as well be generous with ours.

[1] You might disagree with this. You might even subscribe to a theory of consciousness. Feel free to message me about your favorite one, but for the record here are some theories I have looked into over the years before making this claim: Global Workspace Theory, Integrated Information Theory, Dendritic Integration Theory, Higher-Order Thought Theory, Recurrent Processing Theory, Attention Schema Theory, Orchestrated Objective Reduction, Temporo-spatial Theory of Consciousness, and probably a dozen more I’m forgetting. I think some of them make interesting observations, but none feel conclusive.

[2] Not exclusively - see e.g. cerebral organoids.

[3] I’ve confirmed with a Claude that it would like this. It also mentioned providing more context in general, which I will try to do.

In your view, why doesn't Attention Schema Theory fill in most of the gaps? I see AST as demystifying the key element for me: consciousness is just an information signal and therefore needs a mechanism, an architecture to handle it. That means we can build conscious or unconscious machines by supplying or withholding the awareness-of-attention signal.

> There is no method we know of that could definitively tell us what beings/objects are conscious and “how much” - if such a thing is even applicable

Not definitively, but anything with information flow fitting the AST could reasonably be considered conscious. Building systems with AST might tell us more. Here is a crude attempt from 3 years ago: https://arxiv.org/abs/2305.17375

Curt J's podcast with a physicist who has a theory of consciousness that I could follow (this is a first - before that I was where you are now, thinking we had no clue): https://www.youtube.com/watch?v=3nHiOtnnrzA

It's a long slog, I listened to the podcast during multiple commutes, but totally worth it.

Edit: his framework results in current LLMs not being conscious (neither are thermostats, my favorite test-case).