How The Hell Do We Link Our Brains To Computers? (Part 1 of x)

Intro to BCIs. Evaluating technologies. Brief overview of the most common ones.

There are three directions of technological development that I follow very closely:

Artificial Intelligence.

Synthetic Biology.

Brain-Computer Interfaces.

Artificial Intelligence has been scraped clean in terms of discourse. While 5 years ago you could talk about the scaling hypothesis, today we are firmly in Grimes AI-Powered Communism territory. Cool.

Synthetic biology does not get nearly enough attention, but the industry has matured significantly in the last few years. A few important companies are already public, and you can find a lot of info in their investor presentations/SEC filings ($AMRS, $TWST, $ZY, $SRNG - soon to be $DNA). There are reasonable industry publications like SynBioBeta.

Brain-computer interfaces (BCIs, also known as brain-machine interfaces, or BMIs) do not get nearly enough thoughtful commentary. The field got some press when known attention hog Elon Musk got into it by funding Neuralink (which we’ll get to), but the most interesting projects and technologies in the field are virtually unseen by outsiders.

This blog series will cover the history, principles, current state of the art, and future directions, as well as specific approaches and labs to keep an eye on.

Your brain has billions of neurons. The images you see, the sounds you hear, the thoughts you have are all encoded by those neurons firing[1]. When a neuron fires, it sends a message to the neurons it’s connected to. The firing involves sending an electrical signal. We understand electricity pretty well, so, if we can access a neuron, we can perform I/O operations: read from neurons and write to them (reading is frequently described as recording, and writing as stimulating). In principle, if you could access EVERY neuron in my brain and advance your understanding of neurons sufficiently, you could read any thought I am having, as well as implant thoughts in my brain[2].

We are quite far from that. Let’s say that there are 4 stages of development of BCIs.

Stage 1. We can record aggregates of large-scale neural activity. We can also stimulate neurons that are located in a particular brain area.

Stage 2. We can implement basic if-then clauses in the brain. If a relatively small group of neurons experiences a lot of firing, we stimulate another relatively small group of neurons.

Stage 3. General-Purpose BCI device. It can read from and write to a lot of different areas of the brain at once at a decent resolution. It can be used to reconstruct crude versions of what a person is seeing and hearing. It can be used to send text messages between brains. It can also be used to increase or decrease various specific emotions.

Stage 4. Ultimate BCI. It can read from and write to such a large number of neurons at the same time that we can have a completely predictive model of every single neuron in a human brain. We’re not even sure what we are going to be able to do with such a device, but things like memory transfer, consciousness merging, and mind backups are all on the table. This is sci-fi territory right now. If you are interested in reading fiction about it, check out the Nexus trilogy by Ramez Naam.
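To make Stage 2 concrete, here is a minimal Python sketch of the kind of if-then rule it describes. Everything in it is an assumption for illustration: the function name `should_stimulate`, the 100 ms window, the 10-neuron group, and the 50 Hz threshold are hypothetical, and a real device would also need spike sorting, safety limits, and a stimulator to act on the decision.

```python
from typing import List


def should_stimulate(
    spike_times_s: List[float],
    now_s: float,
    window_s: float = 0.1,            # hypothetical: look at the last 100 ms
    n_neurons: int = 10,              # hypothetical: size of the recorded group A
    rate_threshold_hz: float = 50.0,  # hypothetical: what counts as "a lot of firing"
) -> bool:
    """Hypothetical Stage 2 rule: if group A fires a lot, stimulate group B."""
    # Keep only spikes (from any neuron in group A) inside the most recent window.
    recent = [t for t in spike_times_s if now_s - window_s < t <= now_s]
    # Convert the spike count into a mean firing rate per neuron, in spikes/s.
    rate_hz = len(recent) / (window_s * n_neurons)
    return rate_hz >= rate_threshold_hz


quiet = [0.95 + i * 0.005 for i in range(10)]    # 10 spikes in the last 100 ms
busy = [0.9005 + i * 0.0016 for i in range(60)]  # 60 spikes in the last 100 ms
print(should_stimulate(quiet, now_s=1.0))  # False: 10 Hz per neuron
print(should_stimulate(busy, now_s=1.0))   # True: 60 Hz per neuron
```

In a real closed-loop system a check like this would run continuously as new spikes arrive; the hard parts are reliably detecting the spikes in the first place and knowing which group of neurons is worth stimulating.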

Moreover, there are 3 separate tracks that BCIs are on - non-invasive, minimally invasive, and fully invasive.

Non-invasive BCIs do not require any kind of medical procedure to use. For example, they can be helmets that you can take on and off.

Minimally invasive BCIs require a medical procedure but do not require full-scale brain surgery. They might require an injection of tiny objects into your blood that will make their own way to your brain.

Fully invasive BCIs are only implanted using brain surgery.

Right now, non-invasive BCIs are at Stage 1, fully invasive BCIs are getting close to Stage 2, and minimally invasive BCIs are in very early stages of development.

The borders between the categories can be blurry (is an injection directly into the brain minimally invasive or fully invasive?), but in general there is a tradeoff between how invasive a BCI is and how precise/powerful it is. As for evaluating BCIs, the stages are talking points rather than precisely defined milestones. Here are some of the dimensions we use to evaluate BCIs in addition to the level of invasiveness:

Level of precision. Can we read/write to individual neurons[3] or only large parts of the brain? What’s the temporal resolution?

Reach. Can we access large portions of the brain or just a small area?

Data quality. Data science metrics like precision/recall are applicable. Can we reliably detect every single time a neuron fires? Do we ever get false positives?

Data transmission rate. How much information can we send in and out of the brain every second?

Replicability. How similar does the data look for different people[4]? Can you still get data from the brain reliably when a person is moving?

Processing power. It’s frequently not feasible to send information about every single event out of the brain. Thus we might need to do some data preprocessing directly in the body.

Power requirements. Does the BCI require a power source? What kind? Does it have to be implanted in the body, or can we beam energy wirelessly?

Installation precision. Can we install the BCI precisely, or is it more of a spray and pray deal?

Safety & Longevity. Is the installation procedure safe? Is removal possible? Perhaps it naturally degrades over time? How long does it last? What happens after it stops functioning?

Cost & Manufacturing difficulty.
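Several of these dimensions can be made quantitative. The Python sketch below shows three back-of-the-envelope calculations: precision and recall for spike detection (data quality), the information transfer rate formula of Wolpaw and colleagues, a common way BCI papers report throughput in bits per minute (data transmission rate), and how much bandwidth preprocessing on the implant can save (processing power). The function names, the 1 ms matching tolerance, and the implant numbers (1024 channels, 20 kHz, 10-bit samples, 20 ms bins) are hypothetical choices for illustration, not specs of any real device.

```python
import math
from typing import List, Tuple


def precision_recall(true_s: List[float], detected_s: List[float],
                     tol_s: float = 0.001) -> Tuple[float, float]:
    """Score detected spike times against ground-truth spike times.

    A detection is a hit if it lies within tol_s of a true spike that has not
    been matched yet. Greedy matching keeps the sketch short; it is not an
    optimal assignment.
    """
    true_sorted = sorted(true_s)
    matched = [False] * len(true_sorted)
    hits = 0
    for d in sorted(detected_s):
        for i, t in enumerate(true_sorted):
            if not matched[i] and abs(d - t) <= tol_s:
                matched[i] = True
                hits += 1
                break
    precision = hits / len(detected_s) if detected_s else 0.0  # detections that were real
    recall = hits / len(true_s) if true_s else 0.0              # real spikes we caught
    return precision, recall


def itr_bits_per_min(n_choices: int, accuracy: float, seconds_per_selection: float) -> float:
    """Wolpaw information transfer rate for an N-choice BCI.

    bits/selection = log2 N + P log2 P + (1 - P) log2((1 - P) / (N - 1)),
    assuming equally likely choices and errors spread evenly over the others.
    """
    n, p = n_choices, accuracy
    if p <= 1.0 / n:
        return 0.0  # at or below chance: conventionally treated as no information
    bits = math.log2(n) + p * math.log2(p)
    if p < 1.0:
        bits += (1 - p) * math.log2((1 - p) / (n - 1))
    return bits * 60.0 / seconds_per_selection


def stream_rate_mbit_s(channels: int, values_per_s: float, bits_per_value: int) -> float:
    """Bandwidth needed to stream `channels` signals out of the body, in Mbit/s."""
    return channels * values_per_s * bits_per_value / 1e6


print(precision_recall([0.010, 0.020, 0.030], [0.0102, 0.0301, 0.050]))  # 2 of 3 each
print(itr_bits_per_min(4, 1.0, 1.0))  # 120.0: 2 bits per selection, 60 per minute
# Hypothetical implant streaming raw voltages: 1024 channels, 20 kHz, 10-bit samples...
print(stream_rate_mbit_s(1024, 20_000, 10))  # 204.8
# ...versus sending only spike counts in 20 ms bins (50 per second), 8 bits each.
print(stream_rate_mbit_s(1024, 50, 8))  # 0.4096
```

The last two lines show why on-implant processing matters: under these assumed numbers, counting spikes before transmission cuts the required bandwidth by a factor of about 500.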

And, of course, there is every practitioner's favorite dimension - how good is the evidence that the BCI can actually achieve its stated specs. The field has seen everything from mild exaggeration to outright lies[5].

As you can imagine, it is heinously difficult to make a system that does well across all these different dimensions. Let’s take a look at how things have gone so far.

The handsome devil pictured above is Hans Berger. As was customary for young men in the late 19th century, he once had a near-death encounter that involved multiple horses and a cannon. At the same time, his sister had a feeling that something was wrong with Hans and sent him a telegram. That case of potential telepathy impressed him so much that he set off on an epic quest to transmit thoughts. He didn’t get quite that far, but he did invent EEG, which was the first step towards BCIs.

In fact, EEG is still used today! It involves placing some electrodes on the scalp and recording electrical activity from it. EEG is objectively not very good, but it is safe, cheap, and easy to mold into a futuristic-looking shape.

Here’s a recent study that shows that people hospitalized with COVID-19 have abnormalities in their EEG data. Good to know, I guess? EEG data is not super actionable, but it is technically a BCI, so startups using EEG continually seduce investors and raise money.
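What does "recording electrical activity from the scalp" give you in practice? EEG analysis often starts by asking how much power the signal has in a frequency band, for example the alpha band (roughly 8 to 12 Hz), which tends to be strong when a person is relaxed with eyes closed. Below is a minimal Python sketch using a naive discrete Fourier transform and only the standard library. The function name `band_power`, the 128 Hz sampling rate, and the synthetic 10 Hz plus 20 Hz "EEG" are assumptions for illustration; real pipelines would use windowing, averaging methods such as Welch's, and an FFT library.

```python
import cmath
import math
from typing import List


def band_power(signal: List[float], fs_hz: float, lo_hz: float, hi_hz: float) -> float:
    """Power of one channel inside [lo_hz, hi_hz], via a naive O(n^2) DFT."""
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # remove the DC offset (electrode drift, etc.)
    total = 0.0
    for k in range(1, n // 2 + 1):  # positive-frequency bins only
        freq_hz = k * fs_hz / n
        if lo_hz <= freq_hz <= hi_hz:
            coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            total += abs(coeff) ** 2 / n
    return total


# Synthetic one-second "recording": a strong 10 Hz (alpha) rhythm plus a weaker 20 Hz one.
fs = 128.0
eeg = [
    10 * math.sin(2 * math.pi * 10 * t / fs) + 2 * math.sin(2 * math.pi * 20 * t / fs)
    for t in range(128)
]
alpha = band_power(eeg, fs, 8, 12)
beta = band_power(eeg, fs, 13, 30)
print(alpha > beta)  # True
```

A single number per band per electrode is roughly the granularity EEG works at, which is part of why its data is rarely actionable on its own.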

Moving on with our history, the first time the idea to hook up brains to computers was properly explored was in 1973. The exploration was funded by, you guessed it, DARPA. It is incredible how every time I examine a cool new technological field, there’s a DARPA program in its past that really kicked things into high gear.

Around the same time, MRI was being developed, with the first full-body MRI machine completed in 1977. MRI machines have been the workhorses of brain imaging for many decades, and they can still be found in every self-respecting hospital. The version of MRI used to image brain activity is called functional MRI (fMRI). It tracks changes in blood flow and oxygenation in the brain, which correlate with neural activity. It’s been around since the 1990s.

Unlike EEG, fMRI gives you a full 3D map of the brain. The resolution isn’t fantastic, and it’s fairly slow: the blood-flow response lags neural activity by several seconds. But it has allowed us to come up with data points like “when a person looks at images of spiders, a certain part of the brain called the amygdala has a lot of activity”. So now we know in broad strokes what most[6] parts of the brain do. That being said, the list of activities you can engage in inside an fMRI machine is limited. Imaging brains during orgasms is possible, if uncomfortable. But you can’t play soccer or experience freefall in an fMRI machine.
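Why is fMRI slow? The blood-flow response to a brief burst of neural activity unfolds over seconds. A common way to model this is to convolve neural activity with a hemodynamic response function (HRF). The Python sketch below uses a double-gamma HRF with parameters similar to the canonical HRF in the SPM software package (peak shape 6, undershoot shape 16, undershoot ratio 1/6). Treat the exact shape and the function names as assumptions: real HRFs vary across brain regions and people.

```python
import math
from typing import List


def gamma_pdf(t: float, shape: float) -> float:
    """Gamma density with unit scale; zero for t <= 0."""
    if t <= 0:
        return 0.0
    return t ** (shape - 1) * math.exp(-t) / math.gamma(shape)


def canonical_hrf(t: float) -> float:
    """Double-gamma HRF: a positive peak followed by a smaller, later undershoot."""
    return gamma_pdf(t, 6.0) - gamma_pdf(t, 16.0) / 6.0


def predicted_bold(activity: List[float], dt_s: float, hrf_len_s: float = 32.0) -> List[float]:
    """Discrete convolution of a neural-activity time course with the HRF."""
    kernel = [canonical_hrf(i * dt_s) for i in range(int(hrf_len_s / dt_s))]
    out = []
    for n in range(len(activity)):
        acc = 0.0
        for k, h in enumerate(kernel):
            if n - k < 0:
                break
            acc += activity[n - k] * h * dt_s
        out.append(acc)
    return out


dt = 0.1                        # seconds per sample
burst = [1.0] + [0.0] * 299     # a brief burst of neural activity at t = 0, 30 s total
bold = predicted_bold(burst, dt)
peak_s = bold.index(max(bold)) * dt
print(f"BOLD response peaks about {peak_s:.1f} s after the burst")  # roughly 5 s
```

Under this model, a neural event that lasts a fraction of a second produces a blood signal that peaks around five seconds later and takes tens of seconds to settle, which is the lag the text refers to.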

Magnetoencephalography (MEG) is a technique comparable to MRI (it also requires a huge machine). Invented in the late 60s, it measures the tiny magnetic fields produced by neurons firing. Since, unlike fMRI, it doesn’t need to wait for blood flow to detect signals, it achieves a high temporal resolution (under 1 ms). That allows us to use it to try to decode speech[7], for example. However, MEG is quite difficult to set up. The magnetic signal from the brain is so weak that it can be drowned out by Earth’s magnetic field. So, to use MEG, you need to build magnetically shielded rooms, with walls made out of layers of mu-metal, aluminum, and what not.
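To get a feel for how weak the signal is, compare orders of magnitude. Assuming typical textbook figures of about 100 femtotesla for the brain’s field measured outside the head and about 50 microtesla for Earth’s field (which varies with location), the ratio is:

$$
\frac{B_{\text{Earth}}}{B_{\text{brain}}} \approx \frac{5 \times 10^{-5}\,\text{T}}{10^{-13}\,\text{T}} = 5 \times 10^{8}
$$

In other words, under these assumptions the background field is hundreds of millions of times stronger than the signal, before even counting fluctuating fields from everyday sources like power lines and moving metal.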

Functional near-infrared spectroscopy (fNIRS) is a method that’s been gaining in popularity recently. For complex reasons that involve quantum mechanics, near-infrared light is not absorbed very much by water and hemoglobin, the main light absorbers in tissue, which allows us to look fairly deeply into biological samples. fNIRS detects the same type of signal as fMRI: changes in blood oxygenation. The two advantages of fNIRS are that the devices are cheaper (tens of thousands of dollars vs hundreds of thousands for fMRI) and that they are portable. That allows us to use them for research that we cannot conduct in fMRI machines, for example, social neuroscience.

However, fNIRS doesn’t penetrate as deep into the brain as fMRI does. You can use it to examine the neocortex, but deeper structures are out of reach for this technology.
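How does fNIRS turn light measurements into a blood signal? A standard approach is the modified Beer-Lambert law: the change in optical density at each wavelength is a weighted sum of the changes in oxygenated (HbO) and deoxygenated (HbR) hemoglobin, scaled by the source-detector distance and a differential pathlength factor (DPF). Measuring at two wavelengths gives two equations in two unknowns. The Python sketch below solves them. The extinction coefficients are placeholders (only their ordering around the roughly 800 nm isosbestic point is meant to be realistic), and the 3 cm distance and DPF of 6 are assumed typical values.

```python
from typing import Dict, Tuple

# Placeholder extinction coefficients (epsilon_HbO, epsilon_HbR), arbitrary units.
# NOT tabulated values: only the ordering is meant to be realistic (HbR absorbs more
# below the ~800 nm isosbestic point, HbO absorbs more above it).
EPSILON: Dict[int, Tuple[float, float]] = {760: (0.6, 1.5), 850: (1.1, 0.8)}


def delta_od(d_hbo: float, d_hbr: float, wavelength_nm: int,
             distance_cm: float, dpf: float) -> float:
    """Forward model: optical density change under the modified Beer-Lambert law."""
    eps_hbo, eps_hbr = EPSILON[wavelength_nm]
    return (eps_hbo * d_hbo + eps_hbr * d_hbr) * distance_cm * dpf


def hb_changes(d_od: Dict[int, float], distance_cm: float, dpf: float) -> Tuple[float, float]:
    """Invert the two-wavelength system for (delta HbO, delta HbR) with Cramer's rule.

    Using a single DPF for both wavelengths is a simplifying assumption.
    """
    lam1, lam2 = sorted(d_od)
    a, b = EPSILON[lam1]
    c, d = EPSILON[lam2]
    path = distance_cm * dpf
    y1, y2 = d_od[lam1] / path, d_od[lam2] / path
    det = a * d - b * c  # nonzero because the wavelengths "see" HbO and HbR differently
    return (y1 * d - b * y2) / det, (a * y2 - c * y1) / det


# Simulate a typical activation (HbO up, HbR slightly down), then recover it.
measured = {lam: delta_od(0.5, -0.1, lam, distance_cm=3.0, dpf=6.0) for lam in (760, 850)}
print(hb_changes(measured, distance_cm=3.0, dpf=6.0))  # approximately (0.5, -0.1)
```

The two-wavelength design is the reason fNIRS can separate oxygenated from deoxygenated blood at all: at a single wavelength, the two contributions would be indistinguishable.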

We can get extremely high-resolution data from the brain if we just cut the skull open. The most common technique (first developed in the 1950s) is known as electrocorticography (ECoG), and it involves placing electrodes right on top of the neocortex. The main advantage of invasive procedures is resolution: ECoG grids record from small patches of cortex, and electrodes inserted into the tissue itself can record from individual neurons. However, we can only place electrodes on small parts of the brain, so we can’t get full-brain data. Invasive procedures for recording brain activity are rarely used in medicine (only for epilepsy AFAIK), but they are very popular in research labs. One of the most cyberpunk experimental setups I’ve heard of involves a mouse running in place on a ball while wearing VR goggles, with electrodes permanently implanted in its brain. Because the mouse’s head stays fixed while it "moves" through the virtual world, scientists can use techniques that require the head to be stationary while studying navigation. You can see a schematic of the setup in Figure 1 of this paper.
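Recording individual neurons usually starts with spike detection: finding the moments when the voltage on an electrode dips sharply, which is what a nearby neuron firing looks like in an extracellular recording. A common simple method estimates the noise level robustly (the median absolute value divided by 0.6745, which equals the standard deviation for Gaussian noise) and flags crossings of a threshold several times that level. The Python sketch below assumes a 4.5x threshold, a 1 ms dead time after each detection, a 30 kHz sampling rate, and a synthetic signal in microvolts; none of these come from a specific lab’s pipeline.

```python
import math
import statistics
from typing import List


def detect_spikes(voltage: List[float], fs_hz: float, k: float = 4.5,
                  dead_time_s: float = 0.001) -> List[float]:
    """Return times (s) where the signal crosses downward through -k * sigma."""
    sigma = statistics.median(abs(v) for v in voltage) / 0.6745  # robust noise estimate
    threshold = -k * sigma
    skip = int(dead_time_s * fs_hz)  # samples to ignore after each detection
    times: List[float] = []
    i = 1
    while i < len(voltage):
        if voltage[i] < threshold <= voltage[i - 1]:  # downward threshold crossing
            times.append(i / fs_hz)
            i += skip  # avoid counting one spike twice
        i += 1
    return times


# Synthetic 1 s trace at 30 kHz: a small 50 Hz wobble stands in for noise (deterministic,
# so the example is reproducible), plus three -80 microvolt spikes lasting 10 samples each.
fs = 30_000.0
trace = [5.0 * math.sin(2 * math.pi * 50 * i / fs) for i in range(30_000)]
for start in (3_000, 12_000, 21_000):
    for j in range(10):
        trace[start + j] = -80.0
print(detect_spikes(trace, fs))  # [0.1, 0.4, 0.7]
```

Real recordings are messier: several neurons can share one electrode, so a spike-sorting step typically follows to decide which neuron produced each detected spike.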

On the stimulation side, the pickings are a lot slimmer. The most common technique is called tDCS[8] (transcranial direct current stimulation), and it involves placing electrodes on the scalp and zapping it with a weak constant current (typically 1 to 2 mA). tDCS works… a bit? My best impression of the technology is that it works sometimes, there are no guarantees, and effectiveness diminishes drastically if you don’t put the electrodes in precisely the right spot. That being said, transcranial stimulation is used in some medical centers, as well as by hobbyists. Transcranial stimulation is safe, cheap, imprecise, and poorly understood.
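For a sense of scale, assume a typical setup of 2 mA delivered through 5 cm by 5 cm sponge electrodes (25 cm²). The average current density at the electrode is then:

$$
J = \frac{I}{A} = \frac{2\,\text{mA}}{25\,\text{cm}^2} = 0.08\,\text{mA/cm}^2
$$

And that is at the scalp: a large share of the current is shunted through the scalp and skull rather than reaching the brain, which is consistent with small, placement-sensitive effects.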

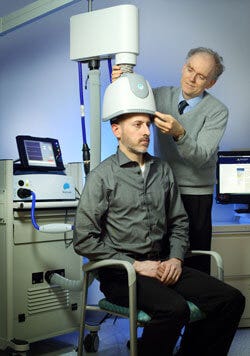

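You can also stimulate the brain noninvasively with magnets. The technique is called, you guessed it, Transcranial Magnetic Stimulation (TMS): a coil held against the head produces a rapidly changing magnetic field, which induces electric currents in the neurons underneath. You can’t do it at home, and it’s a lot more expensive than tDCS, but it is more precise.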

Both tDCS and TMS are used for treating depression and are being investigated for things like chronic pain, addiction, etc.

Of course, we can also stimulate neurons directly if we are willing to do surgery. The practice started before we really knew what different parts of the brain did. If you’re wondering how it worked, well, it was a little scary. If you had epilepsy, a surgeon would cut your skull open under local(!) anaesthesia, stimulate different spots on your brain until he found the ones whose stimulation triggered seizures, and then destroy that tissue. It was called the Montreal Procedure.

Nowadays, we know reasonably well what different parts of the brain do, so we implant devices that stimulate them continuously as a therapy. The practice is called Deep Brain Stimulation (DBS), and it was officially invented in 1987. It’s since been approved by the FDA for treating a few serious conditions like Parkinson’s, dystonia, OCD, and epilepsy. As of right now, the approved devices don’t incorporate any intelligence - they just continuously stimulate the area where they are implanted. DBS is quite risky. Brain surgery itself can cause hemorrhages and infection, and the stimulation can also produce side effects ranging from headaches to hallucinations and hypersexuality.

People have tried combining recording and stimulating technologies. Scientists have tried to analyze brains with fMRI, EEG, or fNIRS and then stimulate them using tDCS or TMS. A closely related approach, neurofeedback, skips the stimulator: the recorded activity is shown back to the person, who tries to learn to regulate it. Neurofeedback has been studied A LOT, but there haven’t been any convincing successes in the field. A 2016 review of neurofeedback calls out “methodological limitations and clinical ambiguities”. While there is no doubt that eventually reading from the brain will allow us to make highly targeted interventions with stimulation, we are simply not there yet.

That concludes an overview of widespread BCI technologies. As you may have noticed, none of them are very good. They are all firmly in Stage 1 of BCI development.

In Part 2, I will plunge into more exciting technologies, which are still in development, and some of which are achieving Stage 2 in research labs, if not in medicine yet. Some of them are extensions of the technologies described above, while others are firmly in the WTF, ARE YOU KIDDING ME territory. If you want to be notified when Part 2 is finished, subscribe below.

[1] Also possibly by glial cells, the non-neuron cells in the brain, which we don’t understand very well. In general, everything I say about the brain here is a vast oversimplification.

[2] Yes, like Inception, but without the heist. Unless the thoughts you implant are heist-related.

[3] Or even dendrites, though it’s not clear that we would need to.

[4] We don’t necessarily understand the limits of replicability at a high scale.

[5] I have an example in mind of blatant fraud in the field that went unpunished. Since such things are controversial, I don’t want to contaminate this post with it, but if there’s interest, I can write about it separately.

[6] There are still mysteries! The claustrum, for example, is so small and so deep that we can’t study it very well. But it seems to be really important for consciousness!

[7] Not very well, though. The linked study claims 96% accuracy in the abstract, but they only had subjects say 5 phrases. We are quite far from full speech decoding, and I don’t think MEG will get us there.

[8] There’s also tACS, but for the purposes of this article, it’s not that different from tDCS.
